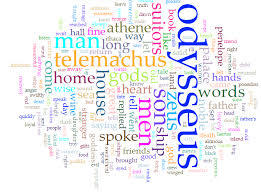

Text mining, text data mining or text analytics is the process of deriving high-quality information from text. It involves "the discovery by computer of new, previously unknown information, by automatically extracting information from different written resources."

A typical analysis task is taking the text of a document and counting the total number of words, counting the number of unique words, computing the frequency of individual words (possibly excluding some very common words), etc. Before computers, such work was extremely tedious, and scholars spent years performing such analysis on popular books such as the Bible or Homer's Iliad. With computers, it's something that any beginning programmer can do (even in a weekly project).

This assignment will involve three tasks:

Task 1: Building a Word Frequency Dictionary. In this task you'll write a function to build a dictionary that associates each word from the text with the number of times that word appears, except that some very common words such as 'the' and 'is' will be excluded. Here is the header for your function:

def createDictionary( filename ):
    """Create a dictionary associating each word in a text file with the
    number of times the word occurs. Also count the total number of
    words and the number of unique words in the text. Certain very
    common words are not included in the dictionary, but are counted.
    Return a triple: (wordCount, uniqueWordCount, dictionary)."""

Your function should perform the following steps:

Here are the words to exclude when creating your dictionary:

['a', 'about', 'after', 'all', 'also', 'am', 'an', 'and', 'any', 'are', 'as', 'at', 'back', 'be', 'because', 'but', 'by', 'can', 'come', 'could', 'day', 'do', 'even', 'first', 'for', 'from', 'get', 'give', 'go', 'good', 'had', 'have', 'he', 'her', 'him', 'his', 'how', 'i', 'if', 'in', 'into', 'is', 'it', 'its', 'just', 'know', 'like', 'look', 'make', 'man', 'me', 'men', 'most', 'my', 'new', 'no', 'not', 'now', 'of', 'on', 'one', 'only', 'or', 'other', 'our', 'out', 'over', 'people', 'said', 'say', 'see', 'she', 'so', 'some', 'take', 'than', 'that', 'the', 'their', 'them', 'then', 'there', 'these', 'they', 'think', 'this', 'time', 'to', 'two', 'up', 'us', 'use', 'want', 'was', 'way', 'we', 'well', 'went', 'were', 'what', 'when', 'which', 'who', 'will', 'with', 'work', 'would', 'year', 'you', 'your']

Don't change this list, because then your counts won't match ours. This list and the function cleanLine described below are here: Code for Project.
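The counting idea behind Task 1 can be sketched as follows. This is only an illustration, not the required createDictionary: it works on an in-memory list of words instead of a file, uses a four-word stand-in for the exclusion list, lowercases each word, and assumes that the unique word count is the number of keys in the dictionary. The names countWords and SAMPLE_EXCLUDED are hypothetical.

```python
# Hypothetical stand-in for the full exclusion list given above.
SAMPLE_EXCLUDED = {"the", "is", "a", "of"}

def countWords(words, excluded=SAMPLE_EXCLUDED):
    """Count the words in a list. Excluded words add to the total
    but are never stored in the dictionary."""
    wordCount = 0
    freq = {}
    for word in words:
        word = word.lower()          # assumption: counting ignores case
        wordCount += 1               # every word counts toward the total
        if word in excluded:
            continue                 # common words are counted, not stored
        freq[word] = freq.get(word, 0) + 1
    # Assumption: "unique words" means the keys that ended up in freq.
    return wordCount, len(freq), freq

total, unique, freq = countWords("The dream is a dream of the nation".split())
print(total, unique, freq)
# 8 2 {'dream': 2, 'nation': 1}
```

The dict.get(word, 0) call supplies 0 for a word not seen before, so new and repeated words are handled by the same line.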

Task 2: Writing the Text Analysis Functions. To perform analyses on the dictionary you created in Task 1, you'll write the following functions:

def sortByFrequency( dict ):
    """Return a list of pairs of (count, word)
    sorted by count in descending order. I.e.,
    the most frequent word should be first in the
    list."""
    pass

# Think about how to use the function sortByFrequency
# for this one.
def mostFrequentWords( dict, k ):
    """Return a list of the k most frequently occurring
    words."""
    pass

def sortByWordLength( dict ):
    """Return a list of pairs of (length, word)
    sorted by length in descending order. I.e.,
    the longest word should be first in the list."""
    pass

# Think about how to use the function sortByWordLength
# for this one.
def longestWords( dict, k ):
    """Return a list of the k longest words in the
    text."""
    pass

# Think about how to use the function sortByWordLength
# for this one.
def shortestWords( dict, k ):
    """Return a list of the k shortest words in the
    text."""
    pass

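Hint: Suppose L is a list of pairs of the form (num, word); you can sort them in reverse (descending) order with the command:

    L.sort( reverse = True )

This will sort them lexicographically, i.e., by the number first and then by the word if the numbers are equal. Because the whole comparison is reversed, words with equal numbers come out in reverse alphabetical order, which is what you see in the longest-word lists of the Sample Output.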
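A small demonstration of how Python orders (num, word) pairs may help here. The counts below are made up for illustration and do not come from any of the test files.

```python
# Made-up (count, word) pairs; two of them share the same count.
pairs = [(2, "ring"), (5, "freedom"), (2, "dream"), (3, "let")]

# Tuples compare element by element: the number first, then the word.
ascending = sorted(pairs)
descending = sorted(pairs, reverse=True)

print(ascending)
# [(2, 'dream'), (2, 'ring'), (3, 'let'), (5, 'freedom')]
print(descending)
# [(5, 'freedom'), (3, 'let'), (2, 'ring'), (2, 'dream')]
```

Note that sorted() returns a new list and leaves pairs unchanged, while pairs.sort( reverse = True ) would reorder the list in place.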

Task 3: Write a main() function to Produce and Print Statistics on the Text. See the Sample Output below for what is required in your report. Your main() function should do the following:

Three files on which to test your code are here: MLK's I Have a Dream Speech, Homer's Odyssey, Bret Harte's The Luck of Roaring Camp.

def cleanLine( s ):
    """Given a string s, remove designated punctuation and convert others:
    non-ascii single quotes to ascii equivalents; underscore and dash
    to space."""
    # Create a translation table that maps any character in string
    # toRemove to a None. Also translates the non-ascii single quote
    # to an ascii single quote and underscore/dash to blank.
    toTranslate = "\u2018\u2019\u2010\u2014\u2012-"
    # str.maketrans needs one replacement per character in toTranslate:
    # two ascii single quotes, then one blank for each remaining character.
    translateTo = "''" + " " * (len(toTranslate) - 2)
    toRemove = ".,;:?$()[]\u201C\u201D\u00A3"
    translationTable = str.maketrans(toTranslate, translateTo, toRemove)
    # Use the translate() method to apply the mapping to string s
    translatedText = s.translate(translationTable)
    # print("Translated Text:", translatedText)
    return translatedText
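To see what the three arguments of str.maketrans do, here is a much smaller table than the one in cleanLine. The character sets are chosen only for this illustration: the first two strings must have the same length and are paired up character by character, and every character in the third string is deleted.

```python
# "_" and "-" each become a blank; "." and "," are removed.
table = str.maketrans("_-", "  ", ".,")
cleaned = "well-known, so_called.".translate(table)
print(cleaned)
# well known so called

# If the first two strings differ in length, maketrans raises ValueError.
try:
    str.maketrans("_-", " ")
    lengthMismatchRaises = False
except ValueError:
    lengthMismatchRaises = True
print(lengthMismatchRaises)
# True
```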

The function cleanLine and the list of excluded words are here: Code for Project.

> python AnalyzeText.py

Enter a filename: MLKDreamSpeech.txt

Text analysis of file: MLKDreamSpeech.txt

Total word count: 1627

Unique word count: 535

10 most frequent words:

[ freedom, negro, let, ring, dream, every, nation, today, satisfied, must ]

10 longest words:

[ discrimination, tranquilizing, righteousness, nullification, interposition,

tribulations, proclamation, pennsylvania, mountainside, invigorating ]

10 shortest words:

[ jr, ago, bad, cup, end, god, has, hew, let, low ]

> python AnalyzeText.py

Enter a filename: Odyssey.txt

Text analysis of file: Odyssey.txt

Total word count: 118079

Unique word count: 6653

10 most frequent words:

[ ulysses, house, has, own, son, did, upon, tell, telemachus, been ]

10 longest words:

[ straightforwardly, inextinguishable, notwithstanding, extraordinarily, disrespectfully,

accomplishments, pyriphlegethon, laestrygonians, interpretation, embellishments ]

10 shortest words:

[ o, v, x, 'i, ii, iv, ix, mt, oh, ox ]

> python AnalyzeText.py

Enter a filename: luck-of-roaring-camp.txt

Text analysis of file: luck-of-roaring-camp.txt

Total word count: 4169

Unique word count: 1475

10 most frequent words:

[ camp, roaring, stumpy, luck, been, kentuck, perhaps, child, river, cabin ]

10 longest words:

[ unsatisfactory, rehabilitation, characteristic, apostrophizing, unfortunately,

unconsciously, transgression, tranquilizing, superstitious, problematical ]

10 shortest words:

[ b, d, n, o, ab, ef, ex, o', oo, ace ]

>

BTW: the Odyssey is broken into "books" which are numbered with Roman numerals. That's why you see words like "v", "x", and "ix".

Update: There was some weirdness in some of the above files, especially Luck of Roaring Camp. I suggest you get your program working on the following simple file: syllabus.txt. It's a very simple file without any non-ascii characters. Remember not to count numbers as words. Here is the result of running my code on this file:

Enter a filename: syllabus.txt

Text analysis of file: syllabus.txt

Total word count: 1020

Unique word count: 412

10 most frequent words:

[ class, ta, don't, programming, course, students, may, grade, find, won't ]

10 longest words:

[ computationally, understanding, spring2026pdf, uninterested, quantitative,

professional, discontinued, collectively, circumstance, asynchronous ]

10 shortest words:

[ cs, dr, ed, ta, ask, bit, did, due, got, let ]

If your code works on this one, you're good. Don't stress about the results for the others.
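On the reminder above about not counting numbers as words, one possible filter is sketched here. It assumes that only tokens made up entirely of digits are skipped, which is a guess that is at least consistent with spring2026pdf appearing among the longest words for syllabus.txt. The helper name isCountableWord is hypothetical.

```python
def isCountableWord(token):
    """Return True unless the token is empty or consists only of digits."""
    return token != "" and not token.isdigit()

tokens = "hw11 is due 2026 at 11 59 pm".split()
kept = [t for t in tokens if isCountableWord(t)]
print(kept)
# ['hw11', 'is', 'due', 'at', 'pm']
```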

Your file must compile and run before submission. It must also contain a header with the following format:

# Assignment: HW11
# File: AnalyzeText.py
# Student:
# UT EID:
# Course Name: CS303E
#
# Date:
# Description of Program:

In Task 2 above, you're asked to write five functions. Once you've written sortByFrequency and sortByWordLength, the others are really pretty simple. If you had to write the others from scratch, you'd be hard pressed.
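As a rough illustration of why the remaining functions become simple, suppose a list of (number, word) pairs has already been sorted. Picking the first k words is then a slice plus a list comprehension. The helper name firstKWords and the sample pairs are hypothetical, and this is one possible shape rather than the required solution.

```python
def firstKWords(sortedPairs, k):
    """Return the words from the first k (number, word) pairs."""
    return [word for (_, word) in sortedPairs[:k]]

# Made-up pairs, already in descending order by count.
byCount = [(5, "freedom"), (3, "let"), (2, "ring"), (2, "dream")]
print(firstKWords(byCount, 2))
# ['freedom', 'let']
```

Slicing never raises an error when k is larger than the list; it simply returns every word available.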

New programmers often avoid writing functions, thinking it's just a waste of time. In fact, deciding what functions to define is often one of the most useful and efficient things you can do when writing a program of any size. Without functional abstraction, it would have been almost impossible to write the huge programming systems that power our lives.