The Pyramid Match:

Efficient Matching for Retrieval and

Recognition

Kristen Grauman and Trevor Darrell

It is often

useful to represent a single example by the collection of local features or

parts that comprise it. For instance, in computer vision, an image may be

described by local features extracted from patches around salient interest

points, or a shape may be described by local descriptors defined at edge

points. Likewise, in natural language processing, documents or topics may be

represented by sets or bags of words; in computational biology, a disease may

be characterized by sets of gene-expression data from multiple patients.

However, measuring similarity between examples represented in this way is

challenging, since sets may vary in cardinality and elements lack a meaningful

ordering.

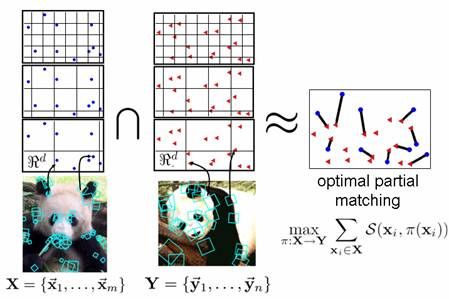

In this work we

develop an efficient method to form a partial matching between two sets of

feature vectors. We show how this matching may be used as a robust measure of

similarity to perform content-based image retrieval, as well as a basis for

learning object categories or inferring 3D pose. We call our approach the pyramid match, since we use a multi-resolution histogram pyramid in

the feature space to implicitly form a feature matching.

The matching has

linear time complexity, naturally forms a Mercer kernel, and is robust to

clutter or outlier features, a critical advantage for handling images with

variable backgrounds, occlusions, and viewpoint changes. This dramatic increase in performance enables

accurate and flexible image comparisons to be made on large-scale data sets,

and removes the need to artificially limit the size of images' local

descriptions. As a result, we can now

access a new class of applications that relies on the analysis of rich visual

data, such as place or object recognition and meta-data labeling. We provide results on several important

vision tasks, including our algorithm's state-of-the-art recognition

performance on a challenging data set of object categories.

Code:

libpmk:

A Pyramid Match Toolkit

Relevant papers and links:

-

K. Grauman and T. Darrell. The

Pyramid Match Kernel: Discriminative Classification with Sets of Image

Features. In Proceedings of the IEEE International Conference on

Computer Vision (ICCV),

Beijing, China, October 2005. (updated results on Caltech-101 are here)

We

introduce the pyramid match algorithm and show applications for discriminative

object recognition. We show the kernel

function is positive-definite, making it valid for use in learning algorithms

whose optimal solutions are guaranteed only for Mercer kernels. We demonstrate our method on object

recognition tasks and show it to be accurate and dramatically faster than

current approaches.

-

Object

recognition results on the Caltech 101 data set

-

K. Grauman and T. Darrell. The

Pyramid Match Kernel: Efficient Learning with Sets of Features. Journal of Machine Learning Research (JMLR),

8 (Apr): 725--760, 2007.

Journal paper expanding on the ICCV

2005 paper, which includes bounds for the error of the pyramid match relative

to the optimal partial matching cost, and results using the PMK for regression.

-

K. Grauman and T. Darrell. Unsupervised

Learning of Categories from Sets of Partially Matching Image Features. In Proceedings

of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR),

We

develop an unsupervised method for learning object categories from unlabeled

images. Using our pyramid match

algorithm, feature sets are embedded into a space where they cluster according

to their partial-match feature correspondences.

A spectral clustering technique is used to recover the primary groupings

among the images. We introduce an

efficient means of refining these groupings according to intra-cluster

statistics over the subsets of features selected by the partial matches between

the images, and based on an optional, variable amount of user supervision. We compute the consistent subsets of feature

correspondences within a grouping to infer category feature masks. The output of the algorithm is a partition of

the data into a set of learned categories, and a set of classifiers trained from

these ranked partitions that can recognize the categories in novel images.

- K. Grauman and T.

Darrell. Approximate

Correspondences in High Dimensions. In

Advances in Neural Information Processing Systems (NIPS), December 2006.

We

introduce the vocabulary-guided

pyramid match, a novel pyramid embedding based on a hierarchy of non-uniformly

shaped bins that takes advantage of the underlying structure of the feature space

and remains accurate even for sets with high-dimensional feature vectors.

Whereas previous matching approximation algorithms suffer from distortion

factors that increase linearly with the feature dimension, we demonstrate that

our approach can maintain constant accuracy even as the feature dimension

increases.

-

K. Grauman and T. Darrell. Pyramid

Match Hashing: Sub-Linear Time Indexing Over Partial Correspondences. In Proceedings

of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR),

We show how to perform sub-linear time

indexing with the pyramid match kernel as the similarity measure using

randomized locality-sensitive hash functions.

-

K.

Grauman. Matching

Sets of Features for Efficient Retrieval and Recognition, Ph.D. Thesis,

MIT, 2006. (35.8 MB)

Presentations:

-

Slides

from ICCV 2005 talk (ppt, 7.88 MB, best viewed

with Office XP)

introduces the pyramid match kernel and shows applications

for discriminative object recognition

-

Slides

from recent colloquia (zipped ppt, 26 MB)

longer talk describing the pyramid match and applications to

image retrieval, object recognition, pose estimation, and category discovery