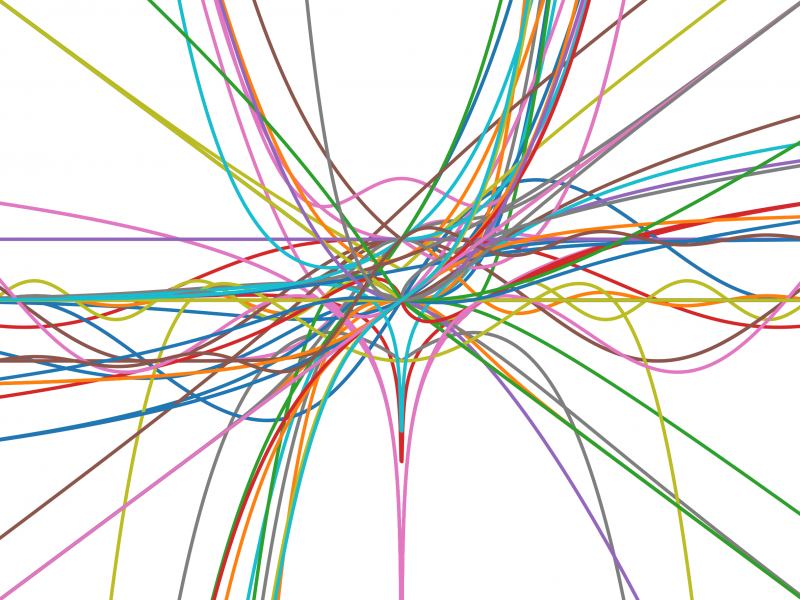

Plot of the activation functions the researchers discovered

Artificial intelligence (AI) is a rapidly evolving field, with new advances appearing every day. While the idea of an AI system may conjure images of an autonomous machine that rattles off facts like a high-tech encyclopedia, complex AI exists only because countless talented people dedicate their time to refining these systems. Texas Computer Science (TXCS) graduate students Garrett Bingham and William Macke, advised by TXCS professor Risto Miikkulainen, are contributing to that effort with their research. Their paper, “Evolutionary Optimization of Deep Learning Activation Functions,” was accepted to the 2020 Genetic and Evolutionary Computation Conference. The work explores evolutionary optimization of activation functions as a way to improve neural networks, which may ultimately lead to smarter and more accurate AI.

A popular technique in machine learning is the neural network: a powerful tool that learns complicated patterns by processing vast amounts of training data. The TXCS researchers focused on one specific part of the network, the activation function, which is what allows the system to learn complicated, nonlinear relationships from the training data. “It's similar to a biological neuron's action potential that determines whether a neuron in the brain fires or not. The difference is that in an artificial neural network, we have the flexibility to choose different activation functions,” said Bingham. Although machine learning researchers can choose from a practically unlimited range of such functions, the team noticed that most tend to stick with “a handful of activation functions that usually work pretty well.” After all, why fix something that isn’t broken?
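For readers who want to see what an activation function is concretely, the minimal Python sketch below defines three standard, widely used functions (ReLU, sigmoid, and tanh) and a hypothetical `neuron` helper that applies one of them to a weighted sum of inputs, loosely mirroring the "fires or not" analogy. These are textbook definitions chosen for illustration, not the functions the researchers discovered, and the input values are arbitrary.

```python
import math


def relu(x: float) -> float:
    """Rectified linear unit: passes positive inputs through, outputs 0 for negatives."""
    return max(0.0, x)


def sigmoid(x: float) -> float:
    """Logistic sigmoid: squashes any input into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def tanh(x: float) -> float:
    """Hyperbolic tangent: squashes any input into the range (-1, 1)."""
    return math.tanh(x)


def neuron(inputs, weights, bias, activation):
    """Hypothetical single artificial neuron: weighted sum plus bias, then the activation."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return activation(z)


if __name__ == "__main__":
    # Arbitrary example values; the same weighted sum gives different outputs
    # depending on which activation function is chosen.
    inputs = [0.5, -1.0, 2.0]
    weights = [0.4, 0.3, -0.2]
    bias = 0.1
    for f in (relu, sigmoid, tanh):
        print(f.__name__, round(neuron(inputs, weights, bias, f), 4))
```

Swapping the `activation` argument is the "flexibility to choose" Bingham mentions: the rest of the neuron stays the same.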

Limiting the range of activation functions used in research can hold back innovation in machine learning. Activation functions are widely acknowledged to be crucial to building more capable and more efficient AI systems, yet methods for finding new ones have received little attention. “There isn't much research that looks at ways of discovering new activation functions that are better than the ones we currently use now,” commented Bingham.

To discover new activation functions, the research team borrowed an idea from biology: evolution. They randomly generated about a hundred activation functions, measured how well each one performed, and then created new functions based on the best performers. In short, it’s survival of the fittest. Bingham said, “By simulating survival of the fittest and genetic mutation, after a few generations of evolution we are able to discover dozens of new activation functions that are better than the ones machine learning practitioners typically use.”
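The generate, evaluate, select, and mutate loop Bingham describes can be illustrated with a toy Python sketch. Everything specific here is an assumption for illustration, not the researchers' code: candidates are hypothetical compositions `binary(unary1(x), unary2(x))` drawn from small made-up operator sets, and because the real fitness (training a network with each candidate and measuring its accuracy) is far too costly for a snippet, `fitness` is a stand-in that scores how closely a candidate matches an arbitrary target curve. Names such as `evolve` and `mutate` are hypothetical.

```python
import math
import random

# Hypothetical operator sets; the search space used in the actual research may differ.
UNARY = {
    "x": lambda x: x,
    "neg": lambda x: -x,
    "relu": lambda x: max(0.0, x),
    "sigmoid": lambda x: 1.0 / (1.0 + math.exp(-x)),
    "tanh": math.tanh,
    "sin": math.sin,
}
BINARY = {
    "add": lambda a, b: a + b,
    "mul": lambda a, b: a * b,
    "max": max,
    "min": min,
}

# Sample points on [-3, 3] used to score candidates.
SAMPLES = [i / 10.0 for i in range(-30, 31)]


def make_function(candidate):
    """Turn a (binary, unary1, unary2) triple into f(x) = binary(unary1(x), unary2(x))."""
    b, u1, u2 = candidate
    return lambda x: BINARY[b](UNARY[u1](x), UNARY[u2](x))


def target(x):
    """Arbitrary stand-in target curve, x * sigmoid(x); NOT the researchers' objective."""
    return x / (1.0 + math.exp(-x))


def fitness(candidate):
    """Stand-in fitness: negative mean squared error against the target (higher is better).
    In real use this would be the accuracy of a network trained with the candidate."""
    f = make_function(candidate)
    return -sum((f(x) - target(x)) ** 2 for x in SAMPLES) / len(SAMPLES)


def random_candidate(rng):
    return (rng.choice(list(BINARY)), rng.choice(list(UNARY)), rng.choice(list(UNARY)))


def mutate(candidate, rng):
    """Genetic mutation: replace one randomly chosen operator in the triple."""
    new = list(candidate)
    slot = rng.randrange(3)
    new[slot] = rng.choice(list(BINARY)) if slot == 0 else rng.choice(list(UNARY))
    return tuple(new)


def evolve(pop_size=100, generations=10, keep=10, seed=0):
    rng = random.Random(seed)
    # Start from about a hundred randomly generated candidates.
    population = [random_candidate(rng) for _ in range(pop_size)]
    for _ in range(generations):
        # Survival of the fittest: keep only the best-scoring candidates.
        parents = sorted(population, key=fitness, reverse=True)[:keep]
        # Refill the population with mutated copies of the survivors.
        children = [mutate(rng.choice(parents), rng) for _ in range(pop_size - keep)]
        population = parents + children
    return max(population, key=fitness)


if __name__ == "__main__":
    best = evolve()
    print("best candidate:", best, "fitness:", fitness(best))
```

Because the survivors are carried over unchanged each generation, the best score never gets worse from one generation to the next. Replacing the stand-in `fitness` with an actual training-and-evaluation run would bring this toy closer to the process the article describes, at vastly higher computational cost.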

As their research progressed, the team found that the activation functions discovered through evolution were “often quite complicated and unlikely to be discovered by humans” and could increase a “neural network’s accuracy by a few percentage points.” They also observed that, rather than producing one activation function for every case, their evolutionary method discovered functions tailored to specific tasks, such as image classification or machine translation. Bingham is optimistic that the research can be a valuable asset to machine learning researchers, because “instead of spending months trying to discover ways to improve their AI systems, we can do it automatically using our algorithm.”

“Evolutionary Optimization of Deep Learning Activation Functions” will be presented in early July at the 2020 Genetic and Evolutionary Computation Conference.